Apr 03, 2019

Zero-Downtime Rolling Deployments With Netflix’s Eureka and Zuul

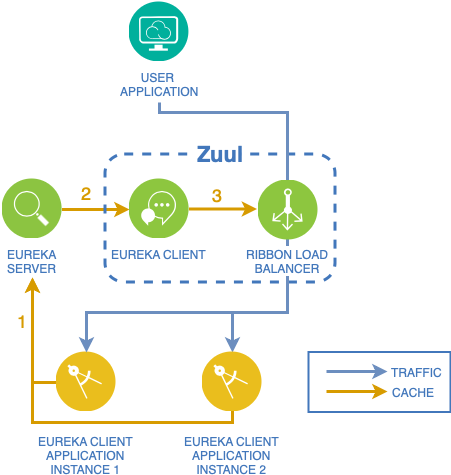

If you’ve ever used Spring Cloud or are familiar with JHipster, then you’ve likely heard of Eureka and Zuul. Eureka and Zuul work in tandem to provide service discovery and load-balanced API routing.

A typical use case for Eureka/Zuul would be a microservice architecture that requires high availability. Zuul acts as the API gateway, providing a uniform, single point of entry into the set of microservices, while Eureka is essentially used as a “metadata” transport. Each client application instance (read: microservice) registers its instance information (location, port, etc.) with the Eureka server. Then Zuul fetches all instance metadata from Eureka to build out its routing table. This allows for multiple instances of the same client application to be deployed, which Zuul can load balance between.

In the absence of a blue/green deployment strategy, we would likely resort to something called a rolling deployment to ensure a zero-downtime deployment. With a rolling deployment, typically there are multiple instances of an application fronted by a load balancer. When we need to deploy a new version of the application, we first disable an instance of the previous version in the load balancer and then shut down the instance. Then we deploy and start an instance of the new version. Once the instance has successfully started, we re-enable the instance in the load balancer before repeating the same process with the rest of the old versioned instances. With this strategy, traffic is temporarily diverted around the down instance so it can be updated without causing an outage. For simplicity’s sake, we will assume an environment consists of a single application with two instances, although these methods will scale with larger environments (just imagine two groups of any size).

To accomplish a rolling deployment with Eureka and Zuul, we need to understand how to remove an instance from Eureka’s registry, and also how to add an instance to Eureka’s registry, all while ensuring Zuul does not forward requests to unavailable instances.

Basic Default Operation

The order of operations for adding an application instance is as follows:

1. Application is deployed and started.

2. The DiscoveryClient bean in the application registers with the Eureka server with status UP.

3. Zuul retrieves the application information (registry) from the Eureka server.

4. Zuul forwards eligible traffic to the newly deployed instance.

Likewise, the process for removing an application instance is as follows:

1. Application is shut down.

2. Shutdown hook in application sends DOWN status to the Eureka server.

3. Zuul retrieves registry data from the Eureka server.

4. Zuul no longer forwards requests to that instance.

The basic process outlined above will effectively add and remove an instance, but we need to ensure that no service outage was introduced in the process. If the listed actions could be processed within a single, atomic operation, then there wouldn’t be an issue. Unfortunately, that is not the case.

What if there is an inbound request after step 2, but before step 3?

During the shutdown process, the client application is no longer taking requests, but Zuul doesn’t know that yet. If Zuul attempts to forward a request to this instance, it will result in a connection error and respond with a 500 to the caller of the API. Not ideal.

During the startup process, the client application is running and capable of taking requests, but again, Zuul doesn’t know that, and will not forward requests to it. This obviously isn’t as big of a concern as shutdowns, especially considering there are likely other instances of the same client application that Zuul is aware of that can fulfill requests. However, this does raise the question…

When will Zuul start sending requests to a newly registered application instance?

It depends. To answer that question, we must first understand how Eureka and Zuul use caching during the propagation of information from one Eureka client to another (remember that Zuul itself is a Eureka client, since it fetches the registry from the Eureka server). For the sake of this illustration, we will view Zuul as its two parts: the Eureka client and the Ribbon load balancer.

There are three caches we must consider:

Eureka Server Response cache – Any call made to the /eureka/apps endpoint will use this cache. This means that although a client application may have registered with Eureka, Eureka will not share that information with other clients until the Server Response cache is updated. By default, it is refreshed every 30 seconds.

Eureka Client cache – Each Eureka Client will cache a copy of the Eureka registry information. It too is refreshed every 30 seconds by default.

Ribbon Load Balancer cache – The load balancer embedded in Zuul also has a cache. This cache is populated by querying the Eureka Client cache. It is also refreshed every 30 seconds.

To answer the question of when will Zuul route requests to newly registered instances, we must consider the worst-case scenario; that is, when all the cache refreshes align in such a way that we must wait the entire refresh period for each cache. In that case, the upper bound of our waiting period would be 30 + 30 + 30 seconds.

It could take up to 90 seconds before Zuul begins sending requests to newly registered client application instances.
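To make the arithmetic concrete, here is a minimal sketch of how a registration moves through the three caches. It assumes a simplified model in which each cache refreshes on a fixed 30-second timer with its own phase offset; the function names and the offsets are hypothetical and only illustrate the reasoning above.

```python
from typing import Sequence


def next_refresh(t: int, period: int, offset: int) -> int:
    """Earliest refresh time >= t for a cache that refreshes at offset + k * period."""
    k = -(-(t - offset) // period)  # integer ceiling of (t - offset) / period
    return offset + k * period


def propagation_delay(registered_at: int, offsets: Sequence[int], period: int = 30) -> int:
    """Seconds until the last cache in the chain has seen the registration.

    offsets holds one refresh phase per cache, in the order
    (server response cache, Eureka client cache, Ribbon cache).
    """
    t = registered_at
    for offset in offsets:
        # Each cache can only pick up the change at its own next refresh.
        t = next_refresh(t, period, offset)
    return t - registered_at


# Unlucky alignment: every cache has refreshed just before the change reaches it.
print(propagation_delay(1, (0, 29, 28)))  # 87 seconds
# Lucky alignment: all three caches refresh right after the registration.
print(propagation_delay(0, (0, 0, 0)))    # 0 seconds
```

Whatever the offsets, the delay in this model stays below 3 × 30 = 90 seconds, which is why 90 seconds is a safe waiting period with the default settings.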

The Problem…

Simply put, with the default configuration, a Eureka Client application instance cannot be shut down without potentially introducing downtime. In the fast-approaching world of Continuous Delivery, where we should be able to reliably deploy at any time of the day, this issue poses a significant problem.

The Solution…

Waiting. I know, it is far from a groundbreaking solution, but Eureka and Zuul do not provide a way to evict and refresh their caches, so our options are limited. We could tweak the cache settings to have them refresh more frequently, but that doesn’t eliminate the issue; it only reduces it.

By simply introducing waiting periods throughout the deployment process, we can ensure that Zuul does not forward requests to unavailable instances. But where should we introduce these waiting periods?

When adding a new instance, the waiting period begins once the application has started and registered with Eureka. When removing an existing instance, the waiting period must take place after the instance has de-registered from Eureka, but before the instance stops accepting requests. This is a bit more complicated and could be accomplished in two ways:

1. Via spring-boot-actuator

Spring Boot provides a few endpoints in its actuator package that we can leverage to accomplish this, specifically the /pause and /shutdown endpoints. A POST to the /pause endpoint will notify Eureka that this instance status is DOWN, effectively removing that instance from the load balancer (pending cache updates, of course). We can use these endpoints to define a shutdown process that ensures zero downtime: call /pause, wait 90 seconds, then call /shutdown.
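As a rough sketch, that shutdown sequence could be scripted as follows. The host name in the usage note is hypothetical, and the /pause and /shutdown paths are taken as written above; your actuator version or configuration may expose them under a different base path and may require authentication.

```python
import time
import urllib.request


def http_post(url: str) -> int:
    """Send an empty POST and return the HTTP status code."""
    request = urllib.request.Request(url, data=b"", method="POST")
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


def pause_then_shutdown(base_url, wait_seconds=90, post=http_post, sleep=time.sleep):
    """Pause the instance, wait for the caches to catch up, then shut it down."""
    base = base_url.rstrip("/")
    post(f"{base}/pause")      # instance reports DOWN to Eureka
    sleep(wait_seconds)        # give Zuul time to stop routing to it
    post(f"{base}/shutdown")   # now it is safe to stop the application
```

Calling pause_then_shutdown("http://app-1:8080") would run the whole sequence against one instance. The post and sleep parameters exist only so the sequence can be exercised without a real server or a real 90-second pause.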

2. Via Eureka API

If actuator is not an option, the Eureka server provides a mechanism to override the status reported by individual application instances. Instead of calling the /pause endpoint, we do a PUT to the /eureka/v2/apps/appId/instanceId/status?value=OUT_OF_SERVICE endpoint on the Eureka server. This shutdown process would be similar to the one above: update a single instance’s status to OUT_OF_SERVICE, wait 90 seconds, then shut down the application.

Note: Be aware that this requires each instance to have a unique instance ID!
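A minimal sketch of the status override call might look like this. The URL path is copied from the text above; the Eureka host, application ID, and instance ID in the example are hypothetical, and your server may be mounted under a different base path.

```python
import urllib.parse
import urllib.request


def status_override_url(eureka_base, app_id, instance_id, status="OUT_OF_SERVICE"):
    """Build the status override URL for one application instance."""
    base = eureka_base.rstrip("/")
    app = urllib.parse.quote(app_id, safe="")
    # Instance IDs often contain colons (host:app:port), so leave those unescaped.
    instance = urllib.parse.quote(instance_id, safe=":")
    query = urllib.parse.urlencode({"value": status})
    return f"{base}/eureka/v2/apps/{app}/{instance}/status?{query}"


def take_out_of_service(eureka_base, app_id, instance_id):
    """PUT the override to the Eureka server and return the HTTP status code."""
    url = status_override_url(eureka_base, app_id, instance_id)
    request = urllib.request.Request(url, data=b"", method="PUT")
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


print(status_override_url("http://eureka:8761", "ORDERS", "host-1:orders:8080"))
```

Because the instance ID is part of the URL, two instances sharing an ID could not be addressed separately, which is the reason for the note above.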

Putting It All Together

Using what we’ve learned, we can construct an overall deployment process that will ensure zero-downtime:

De-register the first instance from the Eureka server.

Wait 90 seconds.

Shut down, deploy, and start the first instance.

Wait 90 seconds.

De-register the second instance from the Eureka server.

Wait 90 seconds.

Shut down, deploy, and start the second instance.

Traffic will begin flowing to the second instance within 90 seconds.
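The steps above can be sketched as a small orchestration loop. This is only an outline under stated assumptions: deregister and redeploy are hypothetical callbacks that you would implement with your own tooling (for example, the /pause call or the Eureka status override for the former), and redeploy is assumed to return once the new instance has registered with Eureka.

```python
import time
from typing import Callable, Iterable


def rolling_deploy(
    instances: Iterable[str],
    deregister: Callable[[str], None],
    redeploy: Callable[[str], None],
    wait_seconds: int = 90,
    sleep: Callable[[float], None] = time.sleep,
) -> None:
    """Update instances one at a time, waiting out the caches around each step."""
    for instance in instances:
        deregister(instance)   # take the instance out of rotation in Eureka
        sleep(wait_seconds)    # wait until Zuul has stopped routing to it
        redeploy(instance)     # shut down, deploy, and start the new version
        sleep(wait_seconds)    # wait until Zuul is routing to the new instance
```

Unlike the list above, this sketch also waits after the last instance, so that the script only returns once traffic should be flowing to every new instance.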

Eliminating Wait Periods With Retry Logic

The above applies with the default Zuul configuration, but what if we add retry logic to Zuul itself? Could we eliminate the need for all the waiting periods? Well no, but we can eliminate some of them!

If we can configure Zuul to retry a failed forward to an available instance, then there is no need for a waiting period during the removal of an application instance from Eureka’s registry. However, this doesn’t mean we can perform a rolling deployment with no waiting periods at all. Since it still takes 90 seconds for Zuul to be aware of a new instance, we must ensure that we don’t start deploying the second instance before Zuul is aware the first one is available. With retry logic, our deployment process becomes:

Shut down, deploy, and start the first instance. Wait for registration with Eureka.

Wait 90 seconds.

Shut down, deploy, and start the second instance.

Traffic will begin flowing to the second instance within 90 seconds.

Our original deployment time of six minutes is cut in half to three minutes with retry logic.
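The numbers come from counting the 90-second windows in each process, including the final window before traffic reaches the last instance. A quick sketch of that count, with a hypothetical helper name:

```python
WAIT_SECONDS = 90


def total_wait(instances: int, retry_enabled: bool, wait_seconds: int = WAIT_SECONDS) -> int:
    """Total seconds spent in waiting windows for a rolling deployment."""
    # Without retry: one window after de-registering, one after starting.
    # With retry: only the window after starting remains.
    windows_per_instance = 1 if retry_enabled else 2
    return instances * windows_per_instance * wait_seconds


print(total_wait(2, retry_enabled=False) / 60)  # 6.0 minutes
print(total_wait(2, retry_enabled=True) / 60)   # 3.0 minutes
```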

Adding Retry Logic

Luckily for us, Zuul already supports retry logic, so we just need to configure it. Add the following dependency to your pom.xml:

<dependency>
    <groupId>org.springframework.retry</groupId>
    <artifactId>spring-retry</artifactId>
</dependency>

and in your application.yml:

zuul.retryable: true
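Conceptually, retrying a failed forward amounts to trying another instance when the first one refuses the connection. The sketch below is not Zuul’s implementation; it is a simplified illustration of the idea, and the instance names, the send callback, and the max_attempts parameter are all hypothetical.

```python
from typing import Callable, Sequence, TypeVar

T = TypeVar("T")


def forward_with_retry(instances: Sequence[str], send: Callable[[str], T], max_attempts: int = 2) -> T:
    """Try each candidate instance in turn until one accepts the request."""
    last_error = None
    for instance in instances[:max_attempts]:
        try:
            return send(instance)
        except ConnectionError as error:
            # The instance is likely shut down but still cached; try the next one.
            last_error = error
    if last_error is None:
        raise RuntimeError("no instances to try")
    raise last_error


def fake_send(instance: str) -> str:
    """Stand-in for a real forward: app-1 is down, every other instance answers."""
    if instance == "app-1:8080":
        raise ConnectionRefusedError("instance is down")
    return f"200 from {instance}"


print(forward_with_retry(["app-1:8080", "app-2:8080"], fake_send))  # 200 from app-2:8080
```

This is why the waiting period before shutdown can be dropped: a request that lands on the stopped instance is answered by the other one instead of surfacing as an error.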

Rolling Deployment, But You Have to Wait

Performing a rolling deployment of Eureka clients is possible, but it will require some type of waiting period. Retry logic at the Zuul layer can help reduce the waiting, but will not eliminate it completely. If your goal is an ultra-fast, flick-of-a-switch type deployment, then look elsewhere. But for most, what Eureka and Zuul provide should be sufficient.
