
May 21, 2018

The Great Credera Bake-Off: Virtual Reality / Augmented Reality Edition

At Credera, we have to stay sharp on a variety of innovative and cutting-edge technologies so that we can serve our clients’ needs and offer an educated point of view on the questions they might have. We do this in many ways, from formal training to side projects, hackathons, and meetups. In this spirit, we informally formed VR Club to explore virtual reality (VR), augmented reality (AR), and mixed reality (MR) technologies. It was also a good excuse to grab free pizza and hang out with some friends.

Recently, we were forwarded the following email, asking for our thoughts on a potential new VR/AR project:

Inquiry for help from a friend of the firm

Needless to say, despite the brief description, we were excited for the opportunity to explore what might be possible in the space. Even though it was not a “real project” yet, we wanted to see what we could do. So, over pizza, our VR Club challenged each other to a good ol’ fashioned “bake off” to see who could come up with the best solution. After all, competition breeds innovation, right?

The Goals:

To produce the best AR or VR proof of concept related to home renovation that could help set the direction for a production-ready implementation.

The Rules:

We set four rules for our bake off:

A max of 24 hours could be spent on concepts. We have day jobs, after all, and we didn’t want to drain the team.

Solutions had to have real-world potential. This wasn’t a real project yet, but it could be soon—how could we anticipate and bridge the “trough of disillusionment”?

Solutions must cast vision. The best prototype would help cast the vision for how the real thing might work.

Solutions must be technically feasible. Any technology limitations or clever workarounds must be documented; solutions had to be both believable and awesome.

Taylor Pickens

What was the concept?

I wanted to create an AR app that would allow users to place virtual household items, such as sinks, lamps, and tables, onto surfaces in the real world.

What was the inspiration?

With most of my experience being in VR using Unity and my PlayStation VR, I had to break out of my comfort zone and explore the completely new world of AR for this project. I found that Google’s ARCore libraries for Android were supported by Unity and that there were some ready-made demos that could be compiled onto my phone and tested with ease.

Initial plane detection and model placement

What went well?

The Unity library was surprisingly solid and easy to get working. ARCore’s plane detection appeared to work extremely well straight out of the box. The demo app I found allows you to click and place little droid bots on horizontal surfaces. After digging into the source code, I found that the library handles all of the heavy lifting and provides you with a horizontal plane you can use within Unity. I replaced the android with a 3D sink model I found online for the prototype and was able to test correct scaling and placement of the object.
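To make the "heavy lifting" a little more concrete, here is a minimal Python sketch of the geometry behind placing a model on a detected horizontal plane: cast a ray from the camera through the tapped point and see where it meets the plane. This is not ARCore's or Unity's API. The `HorizontalPlane` type, `hit_test`, and `uniform_scale` are hypothetical names made up for illustration, and the plane is assumed to be an axis-aligned rectangle at a fixed height.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z) in meters, y pointing up


@dataclass(frozen=True)
class HorizontalPlane:
    """Hypothetical stand-in for a detected surface: a rectangle at height y."""
    y: float
    center_x: float
    center_z: float
    extent_x: float  # full width along x, in meters
    extent_z: float  # full depth along z, in meters


def hit_test(origin: Vec3, direction: Vec3, plane: HorizontalPlane) -> Optional[Vec3]:
    """Return the point where a camera ray meets the plane, or None on a miss."""
    ox, oy, oz = origin
    dx, dy, dz = direction
    if abs(dy) < 1e-9:
        return None  # ray runs parallel to the plane
    t = (plane.y - oy) / dy
    if t <= 0:
        return None  # plane lies behind the camera
    x = ox + t * dx
    z = oz + t * dz
    # Reject hits that land outside the detected rectangle.
    if abs(x - plane.center_x) > plane.extent_x / 2:
        return None
    if abs(z - plane.center_z) > plane.extent_z / 2:
        return None
    return (x, plane.y, z)


def uniform_scale(model_width_units: float, real_width_m: float) -> float:
    """Scale factor that makes a model of the given width match a real-world width."""
    return real_width_m / model_width_units


# Example: phone held 1.5 m above a 2 m x 2 m patch of floor, tilted forward.
floor = HorizontalPlane(y=0.0, center_x=0.0, center_z=0.0, extent_x=2.0, extent_z=2.0)
sink_position = hit_test((0.0, 1.5, 0.0), (0.0, -1.0, -0.5), floor)
```

In a real app the framework supplies both the plane and the hit result. The sketch only shows why a tap can miss (the ray points away from the floor or lands outside the detected area) and how a downloaded model in arbitrary units could be scaled to real-world size.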

What went poorly?

The major limitation we ran into was that none of the main AR libraries can yet detect vertical surfaces. This means no easy placement of objects on walls and no easy painting of them. When testing the app on other devices, we found that some of the dependencies for the AR libraries were not present and had to be downloaded separately. We also discovered that the occlusion challenge is real: when a real object, like a hand, comes between your phone camera and the virtual kitchen sink, the sink is still drawn on top of it. That said, this is hardly a deal breaker for our use case of a mom envisioning her new kitchen. Finally, on newer phones like the Pixel 2 XL, the plane detection is impressive, almost instantaneous. Older devices, however, would take several seconds to compute and build out the lattice of the plane. It was also a bit trippy that the default light source for the 3D models did not align with where our actual lights were.

What did we learn?

The basics worked well! But there were significant rough edges that would still take time to polish to a professional level. The most encouraging thing was that though we had to do a bit of manual dependency management to package things up nicely, we were able to get libraries from different vendors to work reliably together on multiple platforms.

What would we do next time?

The biggest thing we did right was quickly evaluating our options before picking a foundational set of libraries and sticking with them. This space is moving fast, and there are still a lot of immature options out there, so having a framework that took care of the basics limited the amount of work we had to do to get something we could demo.

Zachary Slayter

What was the concept?

I saw an early version of Taylor’s concept and wanted to experiment further with model manipulation and high-fidelity models. I also wanted to explore other platforms so we could compare strengths and weaknesses.

What was the inspiration?

I had experience developing for Oculus’ DK2 back when consumer VR wasn’t a thing, as well as for the Gear VR and the HTC Vive in more recent years. AR development, on the other hand, was new to me for this bake off. I was very excited to see how I could place virtual items in the real world and manipulate them in 3D space. My discoveries built on the lessons from Taylor’s demo, while also providing experience in different technologies.

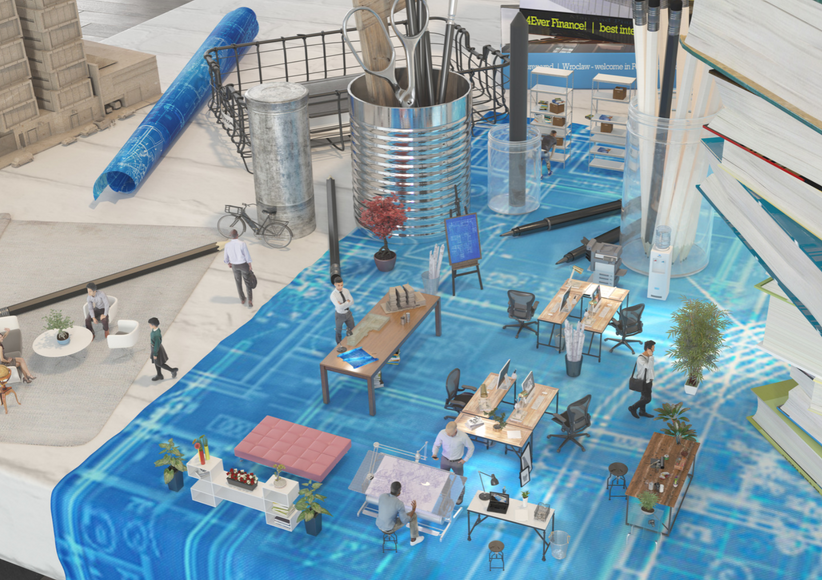

Moving and manipulating models in real-time

What went well?

We found yet another open-source project (MIT License), but this one leveraged the power of Apple’s ARKit, provided a basic user interface, and exposed some services with which we could manipulate 3D objects in space. The interface design was also more user-centric and less developer-centric. We were able to get higher-fidelity 3D models in place, which helped with the realism. Furthermore, we did manage to build out a workflow for automatically importing different types of 3D models, which opened us up to a larger collection of assets. Again, with this iteration, we were able to prove the two new “big rocks” of rearranging furniture and fixtures in real space.

What went poorly?

We still have some issues with occlusion and lighting, as well as with models drifting if you move the device too much. Additionally, acquiring high-quality models and importing them into the app proved to be the most time-consuming task during development.

What did we learn?

Many of the root issues, such as occlusion and model creation, are inherent to the technology space. When building customer-facing applications, these things must be viewed as design constraints and worked around. Otherwise, you will be fighting industry-breaking problems and have a new technology startup on your hands.

What would we do next time?

At this stage, the libraries and frameworks are able to do much of the heavy lifting, so planning for a heavy focus on high-quality 3D art and models should be a priority.

Jon Williamson

What was the concept?

Our prompt mentioned comparing flooring and wall colors, so I wanted to figure out basic texture mapping on horizontal and vertical surfaces.

What was the inspiration?

It turns out that neither the Android nor the iOS AR framework provides out-of-the-box vertical plane detection yet, but I found a demo by @bjarnel on GitHub that showed how to let users place virtual “walls” in a scene to block off an area from virtual balls bouncing around in AR. I was able to take that idea and use it to “mark off” a floor region for placing a new texture.

Mapping out a floor layout and changing the texture

What did we learn?

We learned that vertical plane detection is not supported by the major AR frameworks at this point. However, the beta version of Apple’s ARKit does offer this support. We also learned that the more you cover the screen with computer-generated graphics, the more difficult it is for the phone to maintain accurate positional tracking. This would have to be overcome to keep the digital textures from moving out of position.

What went well?

Working forward from that demo, we decided to code a proof-of-concept for changing the virtual flooring for a room (say a bathroom or kitchen). By allowing users to place their own walls in the AR space, we could fill in the gap between with a new floor. We found a hardwood flooring texture online and spliced it together with the ARKit-occlusion demo.
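The "fill in the gap between the walls" step can be pictured with a small Python sketch. It assumes the user-placed walls give us an ordered list of floor corners as (x, z) points in meters, then computes the floor area, the texture coordinates needed to tile a flooring image at real-world size, and whether a given point sits on the marked-off floor. The function names and the 0.5 m tile size are illustrative assumptions, not code from the ARKit demo we built on.

```python
from typing import List, Tuple

Point2 = Tuple[float, float]  # (x, z) floor coordinates in meters


def polygon_area(corners: List[Point2]) -> float:
    """Area of the floor polygon via the shoelace formula."""
    total = 0.0
    n = len(corners)
    for i in range(n):
        x1, z1 = corners[i]
        x2, z2 = corners[(i + 1) % n]
        total += x1 * z2 - x2 * z1
    return abs(total) / 2.0


def tiled_uvs(corners: List[Point2], tile_size_m: float) -> List[Point2]:
    """Texture coordinates that repeat the flooring image once every tile_size_m meters."""
    return [(x / tile_size_m, z / tile_size_m) for x, z in corners]


def point_in_floor(point: Point2, corners: List[Point2]) -> bool:
    """Ray-casting test: is the point inside the marked-off floor region?"""
    px, pz = point
    inside = False
    n = len(corners)
    for i in range(n):
        x1, z1 = corners[i]
        x2, z2 = corners[(i + 1) % n]
        # Only edges that straddle the point's z value can be crossed.
        if (z1 > pz) != (z2 > pz):
            x_cross = x1 + (pz - z1) * (x2 - x1) / (z2 - z1)
            if px < x_cross:
                inside = not inside
    return inside


# Example: a 3 m x 2 m bathroom floor marked off by four virtual walls.
bathroom = [(0.0, 0.0), (3.0, 0.0), (3.0, 2.0), (0.0, 2.0)]
area_m2 = polygon_area(bathroom)
uvs = tiled_uvs(bathroom, tile_size_m=0.5)
```

Tying the texture coordinates to meters rather than to the polygon's size keeps planks or tiles the same physical size no matter how large the marked-off room is, which matters when comparing flooring options side by side.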

What went poorly?

We quickly realized that the vertical plane mapping technique employed in the demo was sometimes glitchy, and the planes would move around after being placed. We also began to realize the importance of lighting on floor textures to help with more realistic depth perception.

What would we do next time?

Moving forward, our next steps would be to provide a user interface to allow customers to choose different flooring types (think hardwood, laminate, tile, carpet) and compare them. We would also enable the application of colors/textures to the virtual walls to help customers apply different paint colors or even wallpaper.

Conclusion

It’s pretty awesome what you can build in just 24 hours with all of the open-source libraries in the community! That being said, the AR/VR/MR space is still green and has lots of real-world challenges that must be solved to address even simple use cases. Still, with some cleverness and grit, the challenges can be overcome and you can build useful, mind-bending things to enhance your users’ realities.

As for the Great Credera Bake Off: AR Edition, we achieved our two goals of learning a ton and proving that we could quickly and efficiently build viable VR/AR/MR proofs-of-concept. Most importantly, we had fun along the way!

If you have questions on VR or AR, feel free to reach out to us at marketing@credera.com.
