Mar 25, 2013

Iterating Towards Excellence – Part 2: Test-Driven Development

There are many software engineering practices your team can undertake while iterating towards excellence. One of the most fundamental practices is automated testing. In its simplest form, automated testing allows for the codification of user requirements. Your user informs you about what features and functionality are needed, and you write test code that ensures the features and functionality are implemented as specified. The advantages (and disadvantages) of automated testing are not the primary purpose of this blog. Instead, we will focus on the nuts and bolts of how to engage in test-driven development.

Test-driven development is a simple approach to implementing automated tests, and more generally, to designing and implementing high-quality, naturally testable code. It consists of three steps that are repeated until a feature is fully implemented. These steps are commonly referenced as “Red/Green/Refactor.”

1. RED: Write a test that describes how a function ought to work. Running the test at this point will result in failure since you haven’t written any production code to actually implement the function.

2. GREEN: Write *just enough* code to make the test pass. Typically the code is not aesthetically pleasing at this point, which leads to the next step.

3. REFACTOR: Examine the code just implemented and make changes so it conforms to your team’s best practices. This should change the implementation, but not the behavior, thus keeping the test “GREEN.”

Sounds easy, right? It is. Let’s now walk through an example.

WARNING! Code snippets are shown beyond this point. The implementation style reflects the standards our team has adopted for our project. Your standards could be different, and that’s perfectly fine. Try to look past the stylistic details to the essence of what’s going on. Feel free to critique the style – we appreciate feedback!

Example

Suppose we are working on a project where we are required to know the next business day after any given date. After poring over our existing code base and the Internet, we realize that we will have to write it ourselves. Our tools include Visual Studio 2012 and NUnit.

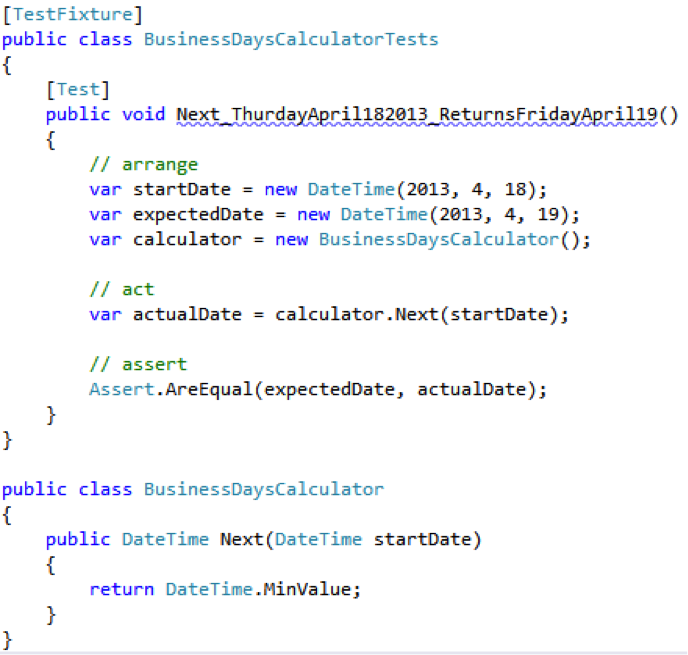

Let’s start with a simple test. Given a random Thursday, the next business day ought to be Friday. First, our RED step:

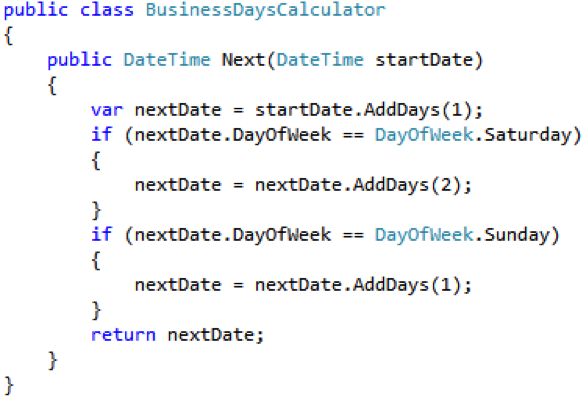

At this point, our solution won’t compile. We haven’t created the BusinessDaysCalculator class yet. Create the class along with a stub for the Next() method. For reference, we would typically create the domain class right inside the source file of the test class. This is convenient for editing until the domain class is fleshed out.
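As a minimal sketch of this step, the test and the stubbed class might sit together in one file like this. The test name, the sample date, and the `Next(DateTime)` signature are assumptions made for illustration, not details taken from the original project.

```csharp
using System;
using NUnit.Framework;

[TestFixture]
public class BusinessDaysCalculatorTests
{
    [Test]
    public void Next_GivenThursday_ReturnsFriday()
    {
        // March 21, 2013 is a Thursday (sample date chosen for illustration).
        var thursday = new DateTime(2013, 3, 21);
        var calculator = new BusinessDaysCalculator();

        var actual = calculator.Next(thursday);

        Assert.That(actual, Is.EqualTo(new DateTime(2013, 3, 22)));
    }
}

// Domain class kept in the test file for now, as described above.
public class BusinessDaysCalculator
{
    // Stub only: lets the solution compile while the test stays RED.
    public DateTime Next(DateTime date)
    {
        throw new NotImplementedException();
    }
}
```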

Now the code compiles. We run the test, and it fails as expected. Here are the results:

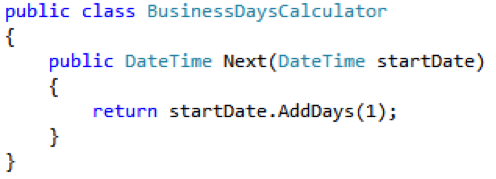

We’re RED, so let’s implement the simplest possible solution that will make it GREEN.
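Assuming `Next` takes and returns a `DateTime` (the signature is an assumption), the simplest change that satisfies the single Thursday scenario could be as small as this sketch:

```csharp
using System;

public class BusinessDaysCalculator
{
    // Just enough to turn the Thursday test GREEN: return the next calendar day.
    // Weekends and holidays are deliberately ignored until a test demands them.
    public DateTime Next(DateTime date)
    {
        return date.AddDays(1);
    }
}
```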

We run the test again and it passes.

At this point, we look to see if there is an opportunity to REFACTOR something; however, we will not find anything. You may think to yourself, ‘that’s the dumbest, most naive implementation ever!’ and it is probably overly simplistic. However, keep in mind that we have only implemented a single scenario. This will change later, but not until we have a test that tells us how it ought to behave given other circumstances.

You will be tempted to change the implementation to something more elaborate, but resist the temptation. Let the principles of KISS (Keep It Simple, Stupid!) and YAGNI (You Ain’t Gonna Need It) be your guide. One of the critiques we often hear from code reviewers (and business owners) is that the implementation is “too complicated” or “took too long because it’s full of gold plating.” Both are legitimate perspectives and concerns that can be addressed if you adhere to the discipline needed to implement the simplest possible thing that makes the test GREEN.

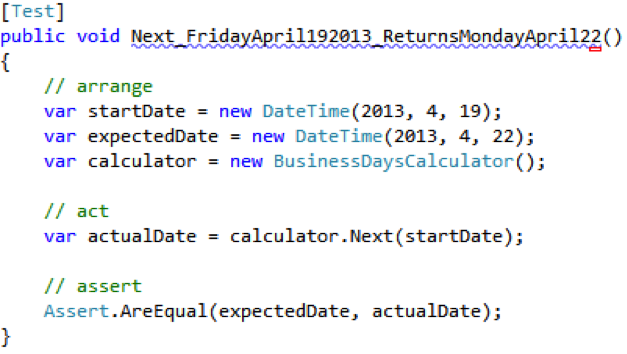

Let’s add a second test. Weekends are not considered business days, so given a random Friday, the next business day ought to be the following Monday. Our RED test follows:
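A sketch of such a test, using March 22, 2013 as the sample Friday. The fixture name, the date, and the single-scenario `Next(DateTime)` shown alongside it are illustrative assumptions:

```csharp
using System;
using NUnit.Framework;

[TestFixture]
public class BusinessDaysCalculatorWeekendTests
{
    [Test]
    public void Next_GivenFriday_ReturnsFollowingMonday()
    {
        var friday = new DateTime(2013, 3, 22);
        var calculator = new BusinessDaysCalculator();

        var actual = calculator.Next(friday);

        // RED: the naive implementation below returns Saturday, March 23.
        Assert.That(actual, Is.EqualTo(new DateTime(2013, 3, 25)));
    }
}

// Assumed current state of the domain class: next calendar day only.
public class BusinessDaysCalculator
{
    public DateTime Next(DateTime date)
    {
        return date.AddDays(1);
    }
}
```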

…with some not so surprising failing results.

To make the test GREEN, we need to implement just enough code to get the calculator to respect the fact that weekends are not business days. The following code gets the job done:
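One way this might look, as a sketch that assumes the `Next(DateTime)` signature and handles the weekend check inline:

```csharp
using System;

public class BusinessDaysCalculator
{
    public DateTime Next(DateTime date)
    {
        var next = date.AddDays(1);

        // Keep moving forward while the candidate lands on a weekend.
        while (next.DayOfWeek == DayOfWeek.Saturday || next.DayOfWeek == DayOfWeek.Sunday)
        {
            next = next.AddDays(1);
        }

        return next;
    }
}
```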

Running the tests after this code is implemented gives us a GREEN result. Now that the tests are all GREEN, we look to see if there is an opportunity to REFACTOR. This time, we find a great candidate. The bit of code that handles offsetting the next business day by skipping over weekends should be a separate method. By performing the refactoring, we have:
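A sketch of the extracted method, where `SkipWeekend` is a hypothetical name chosen for illustration and the behavior is unchanged:

```csharp
using System;

public class BusinessDaysCalculator
{
    public DateTime Next(DateTime date)
    {
        return SkipWeekend(date.AddDays(1));
    }

    // Returns the given date, or the following Monday if it falls on a weekend.
    private static DateTime SkipWeekend(DateTime date)
    {
        while (date.DayOfWeek == DayOfWeek.Saturday || date.DayOfWeek == DayOfWeek.Sunday)
        {
            date = date.AddDays(1);
        }

        return date;
    }
}
```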

Remember to run the tests after every change. The goal is to keep the tests GREEN, meaning the code is expressing the desired behavior while continuously improving the internal implementation.

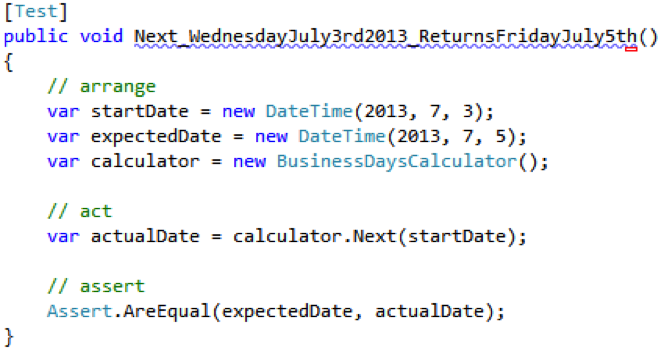

What happens with holidays? For instance, what is the next business day after July 3 in the U.S.? It would be July 5, assuming July 5 is a weekday. Our third test will cover this scenario:
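A sketch of that test for 2013, when July 3 fell on a Wednesday and July 5 on a Friday. The fixture name and the weekend-aware class shown with it are assumptions about the code at this stage:

```csharp
using System;
using NUnit.Framework;

[TestFixture]
public class BusinessDaysCalculatorHolidayTests
{
    [Test]
    public void Next_GivenDayBeforeHoliday_SkipsTheHoliday()
    {
        var dayBeforeHoliday = new DateTime(2013, 7, 3);
        var calculator = new BusinessDaysCalculator();

        var actual = calculator.Next(dayBeforeHoliday);

        // RED: the calculator below knows about weekends only and returns July 4.
        Assert.That(actual, Is.EqualTo(new DateTime(2013, 7, 5)));
    }
}

// Assumed current state of the domain class: skips weekends, not holidays.
public class BusinessDaysCalculator
{
    public DateTime Next(DateTime date)
    {
        var next = date.AddDays(1);
        while (next.DayOfWeek == DayOfWeek.Saturday || next.DayOfWeek == DayOfWeek.Sunday)
        {
            next = next.AddDays(1);
        }
        return next;
    }
}
```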

The test fails with a message similar to the following:

What might our GREEN implementation be? It doesn’t take long to realize that our BusinessDaysCalculator doesn’t know anything about holidays, and it probably won’t. This is a great time to go back to your product owner and ask about their definition of holidays. It could be that they have a centralized calendaring service of some kind and you will need to mock that service out. For our purposes, we will assume that the business owner has provided us with a list of holidays that will never change. The list only has one entry, July 4, 2013. How convenient!

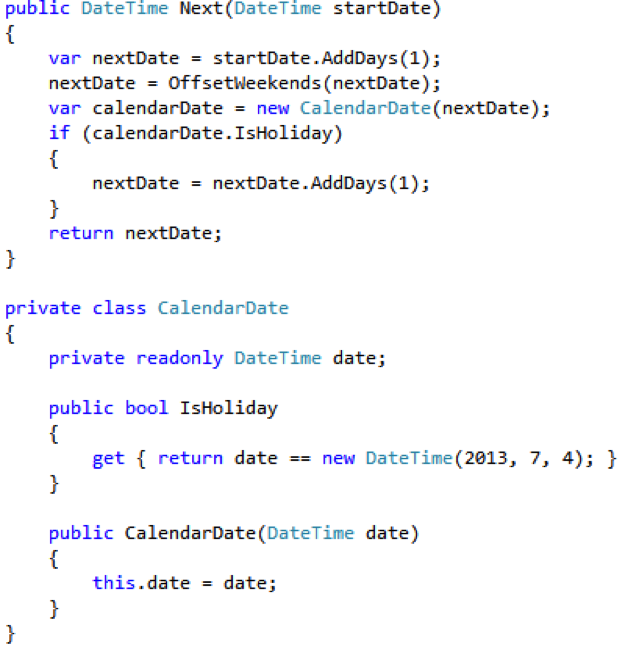

Our GREEN implementation might look like the following:
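As a sketch under the assumptions above (a fixed, one-entry holiday list), with the holiday check written inline for now. The `Holidays` field and `SkipWeekend` helper are hypothetical names:

```csharp
using System;

public class BusinessDaysCalculator
{
    // Fixed list supplied by the business owner; it has a single entry.
    private static readonly DateTime[] Holidays = { new DateTime(2013, 7, 4) };

    public DateTime Next(DateTime date)
    {
        var next = SkipWeekend(date.AddDays(1));

        // Inline holiday handling: step past a holiday, then past any weekend.
        while (Array.IndexOf(Holidays, next.Date) >= 0)
        {
            next = SkipWeekend(next.AddDays(1));
        }

        return next;
    }

    private static DateTime SkipWeekend(DateTime date)
    {
        while (date.DayOfWeek == DayOfWeek.Saturday || date.DayOfWeek == DayOfWeek.Sunday)
        {
            date = date.AddDays(1);
        }

        return date;
    }
}
```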

We run the tests again, and they all pass. There is one more REFACTOR to do to clean up the Next() implementation. We need to move the logic we just implemented to its own method.
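One possible shape for that refactoring, again as a sketch. `SkipHolidays`, `SkipWeekend`, and `Holidays` are hypothetical names, and the behavior is meant to stay exactly as the tests describe:

```csharp
using System;

public class BusinessDaysCalculator
{
    // Fixed list supplied by the business owner; it has a single entry.
    private static readonly DateTime[] Holidays = { new DateTime(2013, 7, 4) };

    public DateTime Next(DateTime date)
    {
        return SkipHolidays(SkipWeekend(date.AddDays(1)));
    }

    // Steps past listed holidays, re-checking for weekends after each step.
    private static DateTime SkipHolidays(DateTime date)
    {
        while (Array.IndexOf(Holidays, date.Date) >= 0)
        {
            date = SkipWeekend(date.AddDays(1));
        }

        return date;
    }

    // Returns the given date, or the following Monday if it falls on a weekend.
    private static DateTime SkipWeekend(DateTime date)
    {
        while (date.DayOfWeek == DayOfWeek.Saturday || date.DayOfWeek == DayOfWeek.Sunday)
        {
            date = date.AddDays(1);
        }

        return date;
    }
}
```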

With the final changes to the BusinessDaysCalculator, we have fully implemented the required functionality. We have also implemented automated tests to prove the calculator behaves the way we expect it to. However, we aren’t finished. Tests are code too, and should be subject to the same rigor with regard to standards as the rest of your code base. Look for duplication to consolidate, variables to name more meaningfully, etc.
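For instance, three near-identical test methods could be consolidated with NUnit’s `[TestCase]` attribute. The sketch below is one way to do that; the fixture name, the `ParseDate` helper, and the compact stand-in for the calculator (included only so the file compiles on its own) are all assumptions for illustration.

```csharp
using System;
using System.Globalization;
using NUnit.Framework;

[TestFixture]
public class BusinessDaysCalculatorParameterizedTests
{
    [TestCase("2013-03-21", "2013-03-22")] // Thursday -> Friday
    [TestCase("2013-03-22", "2013-03-25")] // Friday -> Monday (skips the weekend)
    [TestCase("2013-07-03", "2013-07-05")] // Wednesday -> Friday (skips July 4)
    public void Next_ReturnsFollowingBusinessDay(string given, string expected)
    {
        var calculator = new BusinessDaysCalculator();

        var actual = calculator.Next(ParseDate(given));

        Assert.That(actual, Is.EqualTo(ParseDate(expected)));
    }

    // Dates are passed as strings because attribute arguments must be constants.
    private static DateTime ParseDate(string value)
    {
        return DateTime.ParseExact(value, "yyyy-MM-dd", CultureInfo.InvariantCulture);
    }
}

// Compact stand-in for the finished calculator, kept in the same file.
public class BusinessDaysCalculator
{
    private static readonly DateTime[] Holidays = { new DateTime(2013, 7, 4) };

    public DateTime Next(DateTime date)
    {
        var next = date.AddDays(1);
        while (IsWeekend(next) || Array.IndexOf(Holidays, next.Date) >= 0)
        {
            next = next.AddDays(1);
        }
        return next;
    }

    private static bool IsWeekend(DateTime date)
    {
        return date.DayOfWeek == DayOfWeek.Saturday || date.DayOfWeek == DayOfWeek.Sunday;
    }
}
```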

This is just the tip of the iceberg for what can be accomplished through TDD and automated testing. Developers who would like to see a quick boost in the quality of their output would be well served to spend time practicing the art of writing unit tests. They can also experience a productivity boost by using test-driven development to ensure that they code only as much as is necessary to get the job done. However, this is only one of many practices that can be undertaken as you and your team continue on your journey of iterating towards excellence!

Stay tuned for Part 3 in this series where we will cover continuous integration. Click here if you missed Part 1. To get more great tips and keep up with the latest trends, technologies, interesting news, and thoughts on Microsoft-centric technology topics, follow @CrederaMSFT. If you have questions, we encourage you to join the TDD conversation by sending us a tweet.
